Today I learned about Logistic Regression and applied it to the S&P500 to predict tomorrow’s movement, up or down. Something that can be used for prediction markets.

Linear Regression attempts to quantify a specific value, like predicting the exact value of a stock each day.

Logistic Regression is a classification algorithm. It doesn’t care about exact numbers, only movement, up or down.

With a Bachelor in Finance, I was quite surprised to not have been exposed to this model. Very interesting and quite useful.

For this project, I used 5 years of data of SPY to train the model. I imported the necessary libraries and downloaded the data with yfinance.

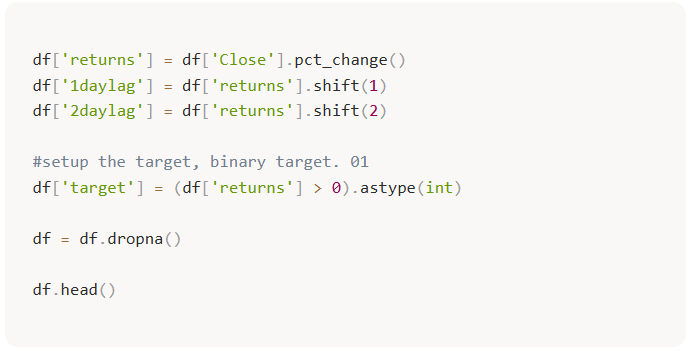

Afterwards, we needed to manipulate the dataframe a bit. We need our model inputs. For this project I decided to keep it simple and only included 2 lags: 1 day returns and 2 day returns.

The preprocessing steps

- Calculate Returns: we transform raw prices into daily percentage changes.

- Create Lags: we use shift(1) and shift(2) to create features based on the previous two days.

- Define the Target: we use astype(int) to create a binary target: 1 if the price went up, and 0 if it stayed flat or dropped.

- Lookahead Bias: by using lags as features (X), we ensure the model only sees what happened yesterday to predict tomorrow. If the model sees today’s data to predict today’s price, it’s not learning, it’s cheating.

Afterwards, we need to separate the data between training and testing. I used an 80/20 chronological split: the first 80% of observations for training, and the final 20% for testing. This keeps the test period in the future relative to the training period, which matters when working with market data. More training data can help, but only if the relationships in the data remain somewhat stable. With markets, that assumption is always questionable.

.jpg)

We are now ready to train the model. Using the sklearn library we initially imported, we use .fit() to train the model.

.png)

Here I learned about the C parameter in the model. Regularization. It is essentially the level of strictness of the model. I kept the C relatively high for this project, so overfitting might be present.

.jpg)

.jpg)

In the next step we used the model on our test data. The results were as expected.

.jpg)

Looking at the summary statistics of the model, we can see that neither of the indicators gives strong evidence of being useful for predicting the next day’s price. This suggests the model was not finding much signal in the lagged returns. Most of its apparent accuracy came from leaning into the market’s upward bias. A p-value around 0.3 gives me no strong evidence that the feature is useful here.

.jpg)

Flaws and biases

- The model predicted the market would go “Up” 261 times out of 263.

- The problem: it only predicted “Down” 2 times.

- The bullish trap: because the S&P 500 tends to go up over time, the model learned that by simply saying “Up” constantly, it can maintain a 57% accuracy rate without actually understanding the market’s randomness.

- The 70% confidence threshold: when I raised the requirement to a 70% probability, the accuracy plummeted to 42.5%. This is the most honest part of the results. It shows that when the model is “sure” of itself, its confidence is not reliable. This does not automatically mean the opposite trade would work, but it does show that the model’s strongest signals were not trustworthy.

.jpg)

Conclusion

A good lesson between accuracy and truth. While the model had an accuracy above 50%, the confusion matrix revealed a strong bullish bias. The model didn’t find a pattern, it just found a trend.

In the future, looking beyond price lags and including signals such as volatility, moving averages, and volume may give the model better inputs. But better features would still need to be tested carefully out of sample, because more inputs can also create more ways to overfit.

This was the first of many projects coming on the way. I am not chasing fame. I want a diary of my own for learning and maybe inspire others to do the same. I don’t call myself a quant or want to be one. Each failed model is just another iteration.

Enjoy the walk.